As digital technologies increasingly underpin public-purpose service delivery across digital health, smart cities, agriculture, and education, ethical considerations are no longer peripheral to system design. Artificial intelligence (AI) interventions operate within complex ecosystems of data infrastructures, devices, institutions, frontline workers, and end-users; these systems are expected to interoperate across domains while remaining reliable, accountable, and socially inclusive.

In this context, we at the AI Innovation & Inclusion Initiative (A4I), in collaboration with the Center for Internet of Ethical Things (CIET), convened a Knowledge Exchange Workshop on “Ethics in IoT and AI Interventions” on 10 December 2025. The workshop was envisioned as a platform to support the development of ethical AI solutions across domains by integrating ethical reasoning directly into system design, deployment, and governance practices. The morning sessions focused on knowledge exchange led by Dr Deepa Austin and Swati Ganesan, followed by an interactive session on Responsible AI using a game-based approach, facilitated by Dr Carolyn Kavita Tauro. The goal was to move beyond abstract principles and allow participants to experience the practical tensions that arise when designing AI systems for real-world settings.

A natural starting point was to turn this lens inward, with the aim of strengthening ethical awareness within the A4I team. Participants included our developers who work on applied AI solutions, and researchers who study the socio-technical nuances associated with these solutions in the domains of health, education and accessible education. The emphasis was not only on ethical principles, but also on building an understanding that supports decision-making in real-world, operational settings.

Accounting for Complexity in AI Ecosystems

A central framing of the workshop was the recognition that contemporary AI ecosystems are increasingly interconnected, interoperable, and cross-domain in nature. These systems enable new forms of functional integration, but also introduce layered dependencies between data pipelines, algorithms, devices, institutional workflows, and policy environments.

The workshop highlighted two major sources of ethical complexity. First, the dynamic nature of contextual interactions — involving multiple stakeholders, evolving operational conditions, and institutional constraints — makes it difficult to anticipate system behaviour in advance. Second, public-purpose deployments are inherently value-sensitive, as they shape access to services, distribution of benefits, and accountability mechanisms.

This perspective aligns closely with A4I’s socio-technical orientation: AI systems cannot be assessed in isolation from the social, organisational, and infrastructural environments in which they operate.

Session 1: A Framework for Ethical Design: Four Core Dimensions

To support structured ethical reflection in applied settings, CIET researchers Dr Deepa Austin and Swati Ganesan introduced the Ethical Assessment Framework for IoT/AI solutions in Digital Health. The framework draws on their collaborative research with the Government of Karnataka and the World Economic Forum across domains including smart cities, healthcare, and agriculture.

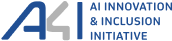

The framework was organised around four derived ethical values: Equity, Fairness, Trust, and Dignity. These dimensions draw on established scholarship in responsible AI and technology ethics.

Figure 1: A Framework for Ethical Design: Four Core Dimensions (derived from Floridi et al. 2018)

Equity was defined in terms of ensuring equal opportunities for end-users across socio-economic and digital divides, as well as fair opportunities for diverse actors — including smaller organisations — to participate in governance and innovation processes. Equity also raises questions of rights, distribution of resources, and who benefits from technological interventions.

Fairness refers both to the absence of bias in system outcomes and to the intentions and assumptions embedded in system design. Transparency of data and methods, and the explainability of system behaviour to relevant stakeholders, are critical for enabling fairness and contestability.

Trust reflects end-user confidence in whether systems perform their intended functions safely and reliably. It depends on privacy protection, security, accountability mechanisms, and the ability of systems to remain answerable to affected stakeholders.

Dignity focuses on preserving meaningful agency and autonomy for both operational staff and end-users. It includes the ability to exercise informed choice, retain professional judgement, and avoid forms of technological control that erode identity, autonomy, or long-term wellbeing.

Rather than treating these values as abstract ideals, the workshop explored how they can guide concrete design and governance decisions.

Translating Ethics into Practice: A Lifecycle Approach

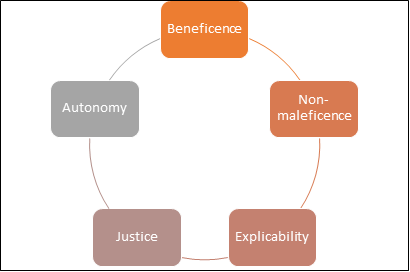

A key contribution of the workshop was the discussion of an ethical assessment framework based on a system lifecycle approach. Participants examined how ethical considerations emerge at four stages: Conceptualisation; Knowledge translation and Development; Service delivery environment; and Scalability and Sustainability.

Figure 2: A system lifecycle approach to ethics in AI

Participants were grouped into developers and researchers, and each group focused on:

- Mapping system functions across stakeholders, interdependencies, and target populations.

- Identifying ethically relevant questions at different stages of the system lifecycle.

- Categorising these questions within the four ethical dimensions.

- Reflecting on how ethical risks can be anticipated and mitigated during the design phase rather than only after deployment.

Photo 1: Researcher Swati Ganesan presenting the framework on Dimensions for Ethical Design

This structured elicitation process encourages us to move beyond general principles and engage with context-specific trade-offs, operational constraints, and institutional responsibilities.
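For teams that want to record the output of such an exercise, the following is a minimal Python sketch of one possible way to do so. It is not a tool used at the workshop. The stage and dimension names come from the framework described above; the class `EthicalQuestion`, the helpers `build_matrix` and `uncovered_cells`, and the two sample questions are hypothetical and serve only as illustration.

```python
from collections import defaultdict
from dataclasses import dataclass

# Stage and dimension names are taken from the framework described above.
STAGES = (
    "Conceptualisation",
    "Knowledge translation and Development",
    "Service delivery environment",
    "Scalability and Sustainability",
)
DIMENSIONS = ("Equity", "Fairness", "Trust", "Dignity")


@dataclass(frozen=True)
class EthicalQuestion:
    """One ethically relevant question, tagged by stage and dimension (hypothetical structure)."""
    text: str
    stage: str
    dimension: str

    def __post_init__(self):
        # Reject tags that are not part of the framework.
        if self.stage not in STAGES:
            raise ValueError(f"unknown lifecycle stage: {self.stage!r}")
        if self.dimension not in DIMENSIONS:
            raise ValueError(f"unknown ethical dimension: {self.dimension!r}")


def build_matrix(questions):
    """Group question texts by their (stage, dimension) cell."""
    matrix = defaultdict(list)
    for q in questions:
        matrix[(q.stage, q.dimension)].append(q.text)
    return dict(matrix)


def uncovered_cells(matrix):
    """List the (stage, dimension) cells for which no question was raised."""
    return [(s, d) for s in STAGES for d in DIMENSIONS if (s, d) not in matrix]


# Hypothetical sample questions, invented for illustration only.
questions = [
    EthicalQuestion(
        "Who was consulted when the problem was defined?",
        "Conceptualisation",
        "Equity",
    ),
    EthicalQuestion(
        "Can frontline workers override the system's suggestions?",
        "Service delivery environment",
        "Dignity",
    ),
]

matrix = build_matrix(questions)
gaps = uncovered_cells(matrix)
print(f"{len(gaps)} of {len(STAGES) * len(DIMENSIONS)} cells have no question yet")
```

Listing the empty cells is one simple way to see which combinations of lifecycle stage and ethical dimension a group has not yet discussed; it does not by itself indicate that those cells matter for a given system.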

Designing for Socio-Technical Integration

The workshop reinforced that ethical design must start from existing human workflows, organisational practices, and governance arrangements. Design decisions related to data capture, automation thresholds, interface design, and system feedback loops shape usability, trust, and long-term sustainability.

Participants reflected on the importance of participatory engagement, iterative testing, and interdisciplinary collaboration in ensuring that systems remain aligned with real-world needs. Ethical design, in this sense, becomes an ongoing capability rather than a one-time compliance exercise.

Session 2: Learning Responsible AI (RAI) Through Play

In this second part of the workshop, participants explored the question of ethical system design through an immersive, game-based simulation called The RAI Health Quest.

Why a Game-Based Approach to Responsible AI?

Responsible AI concepts are often taught through policies, guidelines, and technical checklists. While necessary, these approaches can sometimes feel distant from the messy realities of deployment, where trade-offs, uncertainty, and institutional constraints shape outcomes.

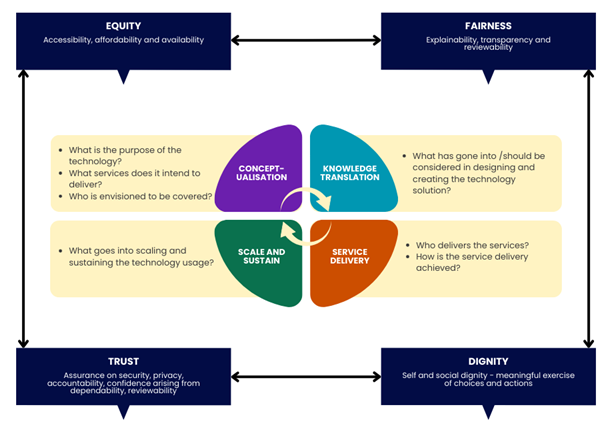

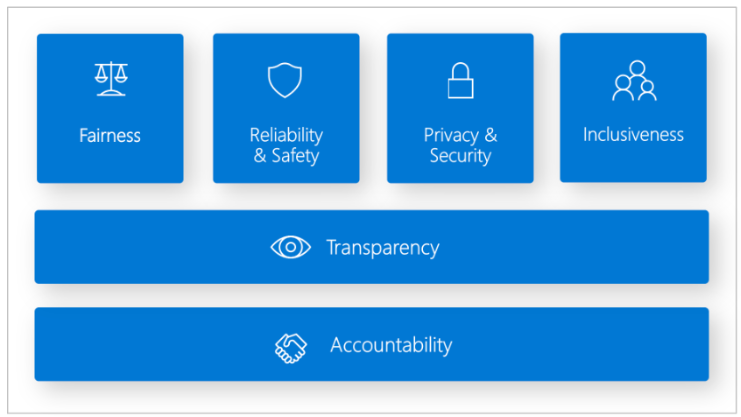

Figure 3: A framework for building AI systems based on six principles (Microsoft, 2025)

The RAI Health Quest used simulation and role play to surface these complexities. Participants played the role of development teams building a chatbot designed to support frontline health workers delivering maternal healthcare at the primary care level. The vision was to contribute toward a sustainable and responsible AI ecosystem, while helping frontline workers bridge everyday knowledge gaps in service delivery.

Photo 2: Participants engaging with the game on Responsible AI

The premise is simple but powerful: the excitement of innovation is real — but so are the stakes. Teams compete not only to build functionality, but to build responsibility into their systems.

RAI principles provided the ethical lens through which players had to evaluate their design and governance choices. Rather than treating these principles as abstract ideals, the game forced teams to prioritise, negotiate, and sometimes compromise under resource constraints, mirroring real project environments. Participants were expected to continuously adapt their strategies. The competitive element also highlighted how market or institutional pressures can influence ethical decision-making. Importantly, the game encouraged interdisciplinary dialogue: engineers and researchers had to articulate assumptions, negotiate priorities, and reconcile technical feasibility with social responsibility.

Reflection as a Learning Tool

Both sessions concluded with structured reflections where participants discussed what surprised them, what trade-offs felt most difficult, and how their decisions evolved over time. Many participants noted that responsible AI is not simply about adding safeguards at the end of development, but about shaping decisions throughout the product lifecycle. The exercises also surfaced how stakeholder engagement, governance planning, and institutional constraints materially affect ethical outcomes. By simulating consequences rather than prescribing answers, they created a safe space for experimentation and critical reflection.

Implications for A4I’s Research and Capacity Building

The workshop contributed directly to A4I’s broader mission of advancing responsible, evidence-informed AI in public and social sectors. Insights from the sessions are informing:

- The integration of ethical assessment into project planning and evaluation.

- Interdisciplinary collaboration across engineering, social science, and policy research.

- Capacity building among research associates and students working on applied AI systems.

- Engagement with institutional partners on governance, accountability, and sustainability.

By embedding ethical reasoning into everyday research practice, A4I strengthens its ability to support trustworthy and socially responsive innovation.

Looking Ahead

As digital systems continue to expand across public infrastructure and service delivery, the ability to systematically assess ethical implications will become increasingly important for researchers, implementers, and policymakers alike. Frameworks that acknowledge complexity, contextual variation, and institutional dynamics provide practical pathways for responsible deployment.

Alongside this, interactive approaches like the RAI Health Quest help cultivate these capabilities. They align closely with A4I’s mission of advancing socially grounded, evidence-informed AI innovation — and contribute to building a community of practitioners equipped to design technologies that serve public value.

These conversations will continue at the upcoming AI for Impact Summit in Delhi, where stakeholders will engage on the future of AI in public systems, governance models, and inclusive innovation pathways.

At A4I, building impactful AI also means building ethical intelligence: the capacity to design, evaluate, and govern complex socio-technical systems in ways that advance public value.