The A4I Research Associates have been out travelling for on-site research for a total of 8 weeks in the last four months! Supported by the field managers at our partner organisations, Khushi Baby, Sikshana Foundation and Vision Empower, we travelled across Rajasthan and Karnataka, visiting different districts, to speak to people at primary health care centres (Rajasthan), schools for the blind and government schools (Karnataka).

The experience was exhilarating in parts. We rode through forests and open fields of sugarcane on the way to remote schools. We waited at empty bus stations at midnight, travelled in rickety old buses, and stayed in dingy hotels with paan-stained floors. We went to remote sub-centres, visited PHCs in areas with almost no transport facilities, and weathered everything from seasons of rain with no power or network to extreme cold in Rajasthan.

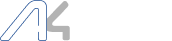

Some glimpses: North Karnataka Rotti meals that we had at a small family-run eatery, a school in rural coastal Karnataka

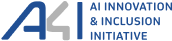

Through our on-site interactions with ASHAs in Rajasthan, public school teachers, and teachers of students with visual disabilities, we met many new people, listened to the things they had to say, and ate a lot of new foods. We share some of our learnings from this experience below.

1. The Importance of Considering Context

We intended to find insights around the use of AI-based technologies and how they affected participants' knowledge practices; we expected complaints about the platform, bugs, digital literacy gaps, or mismatches with existing systems. We were mistaken.

The AI tools figured very little in the landscape of our participants’ experiences. Technology was, in essence, a very tiny part of a much broader ecosystem. To understand the ‘field’ better, we had to broaden our research and be willing to take in everything that the teachers and ASHA workers had to share with us. This meant extending our participant reach beyond the obvious: speaking to officials at government offices, visiting a Nali Kali classroom even though elementary education was not part of the intended cohort, or visiting an ASHA’s house for an interview because the village sub-centre was in no state to be used.

From there, we could piece together an understanding of where the AI tools lay in the ecosystem. Starting with a broad picture and understanding sociopolitical contexts worked out much better for us than beginning our study with just the AI tools.

2. Reflecting on our Positionalities as Researchers

Our positionalities in the field shaped our interactions with research participants.

In Rajasthan, we noticed that the ASHAs were initially hesitant to engage with us. They were busy with numerous responsibilities, from field visits and surveys to managing multiple apps and administrative tasks. Our NGO partner introduced us as representatives from the industry, there to ask them questions, which led them to assume that we were there to evaluate them. This intimidated them, and they felt pressure to respond in a certain way. As we interacted with them further, and as they understood our intent, they began to open up. They shared their frustrations about the constant need to learn new apps, manage different systems, and work under challenging conditions.

In Karnataka, too, teachers had to take time out of the few break periods they had to speak with us. We came labelled with the terms ‘AI’ and ‘Microsoft’, and partnered with an NGO they were familiar with. That commanded a certain respect and interest, but also trust, encouraging an openness that we did not expect. Teachers at the blind schools were also incredibly open with us in sharing feedback, concerns, day-to-day challenges and classroom realities. Our engagement through the local implementing partner helped create a context of familiarity and trust, making it easier for conversations to move from formal responses to perspectives that helped us better understand how existing systems work in practice.

A board saying AI Enabled School displayed at the school

With state bureaucracy, however, we noticed some hints of distrust. One official rightfully asked us: “Sure, you’ll come and do research, you’ll ask us questions, and you will get our input. But what will you do with it? What will you give us?” Another official noticed our industry affiliation and pointed out that he did not want Big Tech extracting money from teachers’ and students’ data. These were all wonderful reminders that research like ours should never be extractive: while these are values that we already hold, such nudges are always welcome.

Interacting with each stakeholder required us to understand our positions in relation to theirs. Where did we come from? What were their backgrounds? What biases did they have, and what biases did we come with? The answers to these questions both closed and opened doors for us in our enquiry, and grappling with them has been an ongoing process in our work.

3. Responsibility — or the Conundrum of Doing Too Little vs. Too Much

The systems we’re studying are often broken.

When newly elected governments revise school curricula, even minor syllabus changes trigger the reprinting of textbooks. What is never accounted for, however, is the additional time, specialised infrastructure, and fragile supply chains required to produce Braille textbooks for students with vision impairment. With limited and often unreliable Braille printing facilities, these changes lead to significant delays, leaving visually impaired students without timely access to learning materials and placing additional strain on their teachers. Schools see broken pipes, no water supply, no tiles in bathrooms, and insufficient tables and chairs. Parents of visually impaired children hesitate to send their kids to school after seeing the poor infrastructure; other parents choose private schools instead. ASHAs must undergo training sessions in overcrowded rooms in harsh weather, for long hours with no breaks and not enough technical support.

It became clear to us that while technology could help, it could not address the fundamental issue of systemic neglect. True change could happen only when we focused on strengthening the system itself and not merely by adding more tools.

On one hand, creating valuable change and impact in a thoroughly fragmented system seems impossible, and we are afraid that nothing we do will be enough to help the people we wish to help.

On the other hand, we know our participants are deeply frustrated, overworked, and underpaid. The indirect beneficiaries of the AI tools are also marginalised: children with disabilities, and women and children from low-income backgrounds. All of them carry very little power in the rigid hierarchies they are part of. The addition of ‘more tools’ brings a lot of stress to an already burdened system. Each tool carries tasks of data entry, tracking, managing errors, and handling repair, forcing them to take time away from their core tasks: education and healthcare. One ASHA expressed: “Time lagta hai maam seekhne mein, kya karein seekhna padta hai – kaam hi isme hi hota hai, ek seekhte hai tab tak dusra aa jata hai – yeh chor ke uspe lag jate hai, phir isko chalan bhool jate hai,” [“It takes time to learn ma’am, but what to do? We have to learn – our work is in this only, by the time we learn one, another comes up, then we have to leave this and get on that, but then we forget how to use this.”], referring to the constant updates and changes they face while trying to master new tools.

Knowing this, the weight of our work seems heavier. We realise that our work needs to be carried out sensitively, and that the suggestions we offer about our tools must be light-footed. We ought to go slow and avoid innovating for the sake of innovation. Our on-site work thus also reinforced the responsibility we carry as researchers in creating knowledge and informing innovation.

4. Despite Everything: Hope

Despite the many challenges, there was also hope.

We met many people deeply committed to their work and supportive of their peers, even under difficult conditions. In Rajasthan, there were Medical Officers, Auxiliary Nurse Midwives, and Community Health Officers who empathised with their colleagues, offering support that kept them going and encouraged mutual learning. Some ASHAs shared stories of how they managed to connect with remote villagers or helped people in need, despite having little to no support or appreciation for their work. In Karnataka, we found strong communities of donors and trustees for some blind schools who provide monetary support whenever needed. Public schools were staffed by incredibly motivated teachers and school heads who did their best for their students with the limited resources they had.

We even encountered government officials who, despite systemic hurdles, were doing their best to keep the system running.

A teacher proudly showing the achievements of his students in sports

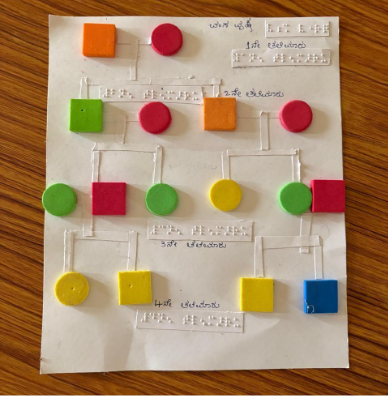

Tactile aids made by hand by Vision Empower school facilitators

In conversation with a government official, discussing on-ground realities

Our on-site interactions with NGO partners, ASHAs, and schoolteachers helped us make better sense of not just the technology, but also the networks and institutional contexts around the tools we were studying. As our research progresses, we hope to continue with sensitivity, using our findings towards systems that truly support the kind of essential frontline labour that ASHAs and schoolteachers do.

Written by: Aanchal, Santhosh, Sneha, and Sookthi